Long-Tailed Anomaly Detection with Learnable Class Names

¹UC San Diego, ²Mitsubishi Electric Research Labs (MERL)

Overview

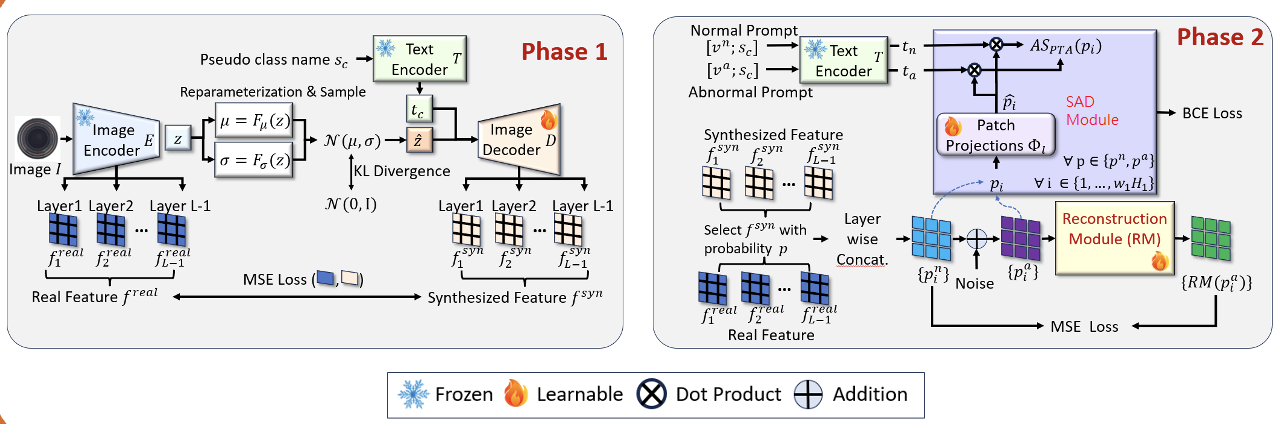

Anomaly detection (AD) aims to identify defective images and localize their defects (if any). Ideally, AD models should (i) detect defects across many image classes, (ii) avoid relying on hard-coded class names, which can be uninformative or inconsistent across datasets, (iii) learn without anomaly supervision, and (iv) be robust to the long-tailed class distributions of real-world applications. To address these challenges, we formulate the problem of long-tailed AD by introducing several datasets with different levels of class imbalance, together with metrics for performance evaluation.

We then propose a novel method, LTAD, that detects defects across multiple classes with long-tailed distributions without relying on dataset class names. LTAD combines AD by reconstruction with semantic AD. AD by reconstruction is implemented with a transformer-based reconstruction module. Semantic AD is implemented with a binary classifier that relies on learned pseudo class names and a pretrained foundation model. These modules are learned in two phases. Phase 1 learns the pseudo class names and a variational autoencoder (VAE) for feature synthesis, which augments the training data to counter the long tail. Phase 2 then learns the parameters of LTAD's reconstruction and classification modules. Extensive experiments on the proposed long-tailed datasets show that LTAD substantially outperforms state-of-the-art methods for most forms of dataset imbalance.
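The two detection paths described above can be illustrated with a minimal, dependency-free Python sketch. It assumes that patch features, their reconstructions, and two text embeddings (for hypothetical prompts such as "a photo of a normal <pseudo-class>" and "a photo of an anomalous <pseudo-class>") are already available as plain vectors. The temperature-scaled two-way softmax, the max-over-patches image score, and the weighted-average fusion with `alpha` are illustrative assumptions, not LTAD's published scoring rule, and all function names are hypothetical.

```python
import math


def l2(a, b):
    """Euclidean distance between two equal-length feature vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def cosine(a, b):
    """Cosine similarity between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def reconstruction_score(patches, reconstructed):
    """AD by reconstruction: mean per-patch error between features and their reconstructions."""
    errors = [l2(p, r) for p, r in zip(patches, reconstructed)]
    return sum(errors) / len(errors)


def semantic_score(patch_embeds, normal_text, anomalous_text, temperature=0.07):
    """Semantic AD as a binary classifier (assumed form): for each already-projected
    patch embedding, a two-way softmax over its similarities to the 'normal' and
    'anomalous' text embeddings. Returns the largest per-patch anomaly probability."""
    probs = []
    for e in patch_embeds:
        s_normal = cosine(e, normal_text) / temperature
        s_anomalous = cosine(e, anomalous_text) / temperature
        m = max(s_normal, s_anomalous)  # subtract the max for numerical stability
        exp_n, exp_a = math.exp(s_normal - m), math.exp(s_anomalous - m)
        probs.append(exp_a / (exp_n + exp_a))
    return max(probs)


def fused_score(rec, sem, alpha=0.5):
    """Hypothetical fusion: weighted average of the two scores (assumes comparable scales)."""
    return alpha * rec + (1.0 - alpha) * sem


# Toy example: two 2-D patch features; the second one is poorly reconstructed.
patches = [[1.0, 0.0], [0.0, 1.0]]
reconstructed = [[1.0, 0.0], [0.0, 0.5]]
normal_text, anomalous_text = [1.0, 0.0], [0.0, 1.0]

rec = reconstruction_score(patches, reconstructed)  # (0.0 + 0.5) / 2 = 0.25
sem = semantic_score(patches, normal_text, anomalous_text)  # close to 1.0
print(rec, sem, fused_score(rec, sem))
```

Because the reconstruction error is unbounded while the semantic score lies in [0, 1], a practical pipeline would likely normalize both (for example on held-out normal data) before fusing them; the fixed `alpha` is only for illustration.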

Models

LTAD Architecture: (Left) Phase 1 of LTAD learns a VAE-style decoder for feature augmentation, conditioned on a learned pseudo class name. (Right) Phase 2 learns the parameters of the reconstruction module (RM) and the patch projections that map visual features into the semantic space of the semantic AD (SAD) module.
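Phase 1's feature augmentation can be sketched in plain Python. The sketch assumes the decoder takes a latent vector concatenated with a pseudo class embedding, uses a fixed linear map as a stand-in for the learned decoder, and tops up every class to the head-class count. Those choices and the names `decode`, `synthetic_budget`, and `augment` are hypothetical, not taken from LTAD's implementation; the reparameterization trick and the KL term are the standard VAE ingredients.

```python
import math
import random


def reparameterize(mu, log_var, rng):
    """Training-time sampling z = mu + sigma * eps, eps ~ N(0, I) (reparameterization trick)."""
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0) for m, lv in zip(mu, log_var)]


def kl_to_standard_normal(mu, log_var):
    """KL(N(mu, diag(exp(log_var))) || N(0, I)), the usual VAE regularizer."""
    return 0.5 * sum(m * m + math.exp(lv) - 1.0 - lv for m, lv in zip(mu, log_var))


def decode(z, class_embed, weights):
    """Toy conditional decoder: a fixed linear map of concat(z, class_embed).
    A real decoder would be a learned network; `weights` stands in for its parameters."""
    x = list(z) + list(class_embed)
    return [sum(w * xi for w, xi in zip(row, x)) for row in weights]


def synthetic_budget(class_counts, target=None):
    """Synthetic features each class needs to reach `target` (default: head-class count)."""
    if target is None:
        target = max(class_counts.values())
    return {c: max(0, target - n) for c, n in class_counts.items()}


def augment(class_counts, class_embeds, weights, latent_dim, seed=0):
    """Generation time: sample z from the prior and decode it with each class's embedding."""
    rng = random.Random(seed)
    synthetic = {}
    for c, k in synthetic_budget(class_counts).items():
        synthetic[c] = [
            decode([rng.gauss(0.0, 1.0) for _ in range(latent_dim)], class_embeds[c], weights)
            for _ in range(k)
        ]
    return synthetic


# Toy example with hypothetical classes: one head class and one tail class.
counts = {"class_head": 3, "class_tail": 1}
embeds = {"class_head": [1.0], "class_tail": [-1.0]}  # stand-ins for learned pseudo class embeddings
weights = [[1.0, 0.0, 0.0], [0.0, 0.0, 1.0]]  # latent_dim=2 plus 1 embedding dimension
fake = augment(counts, embeds, weights, latent_dim=2)
print({c: len(v) for c, v in fake.items()})  # {'class_head': 0, 'class_tail': 2}
print(kl_to_standard_normal([0.0, 0.0], [0.0, 0.0]))  # 0.0
```

Conditioning on a per-class embedding lets a single decoder generate features for every class, so tail classes borrow the decoder's capacity from head classes instead of needing a generator of their own.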

Dataset

The dataset is available on Zenodo.
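The overview describes datasets with different levels of class imbalance. The sketch below shows one common way to derive such a split from a balanced dataset, using the exponential profile popular in long-tailed classification benchmarks; it is an assumption for illustration, not necessarily how the released datasets were built. `long_tailed_counts` and `subsample` are hypothetical helpers, and `imbalance_ratio` is the ratio between the head-class and tail-class counts.

```python
import random


def long_tailed_counts(n_max, num_classes, imbalance_ratio):
    """Exponentially decaying per-class counts, from n_max (head class)
    down to about n_max / imbalance_ratio (tail class)."""
    if num_classes == 1:
        return [n_max]
    return [
        max(1, round(n_max * imbalance_ratio ** (-i / (num_classes - 1))))
        for i in range(num_classes)
    ]


def subsample(samples_by_class, imbalance_ratio, seed=0):
    """Keep a random subset of each class; classes keep their given order (first = head)."""
    rng = random.Random(seed)
    classes = list(samples_by_class)
    n_max = max(len(v) for v in samples_by_class.values())
    counts = long_tailed_counts(n_max, len(classes), imbalance_ratio)
    return {
        c: rng.sample(samples_by_class[c], min(k, len(samples_by_class[c])))
        for c, k in zip(classes, counts)
    }


print(long_tailed_counts(100, 3, 100))  # [100, 10, 1]
```

Larger `imbalance_ratio` values give a steeper tail, while a ratio of 1 leaves every class at `n_max`.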

Acknowledgements

CH and KCP were supported by Mitsubishi Electric Research Laboratories. CH and NV were partially funded by NSF award IIS-2303153 and a gift from Qualcomm.