MicroNet: Towards Image Recognition with Extremely Low FLOPs

Yinpeng Chen2

Xiyang Dai2

Dongdong Chen2

Mengchen Liu2

Lu Yuan2

Zicheng Liu2

Zhang Lei2

UC San Diego1, Microsoft2

Overview

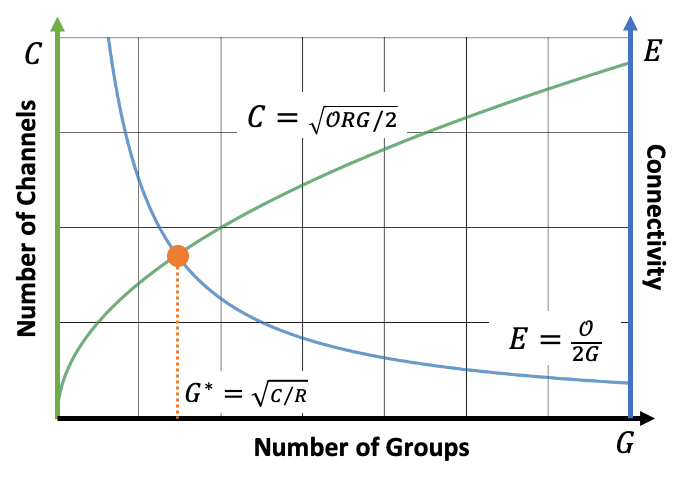

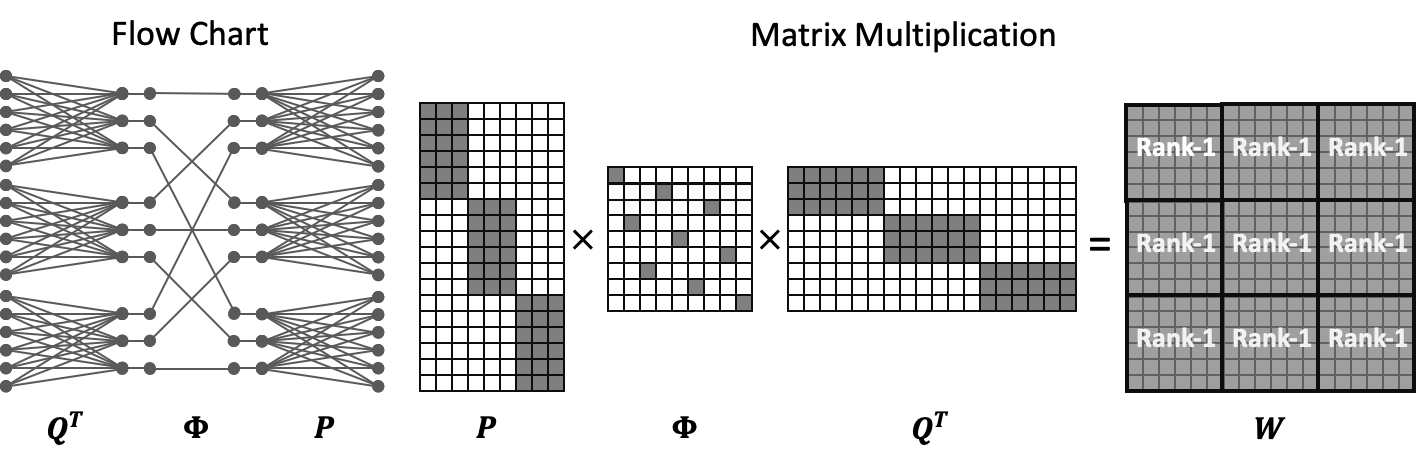

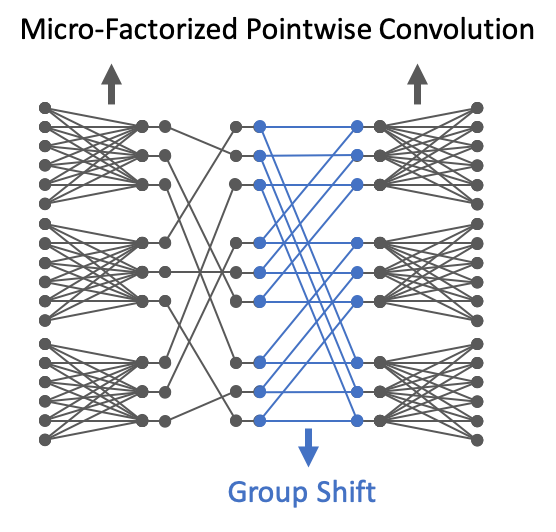

This project addresses the substantial performance degradation that occurs at extremely low computational cost (e.g. 5M FLOPs on ImageNet classification). We found that two factors, sparse connectivity and a dynamic activation function, are effective at improving accuracy. The former avoids a significant reduction in network width, while the latter mitigates the harm caused by reducing network depth. Technically, we propose micro-factorized convolution, which factorizes a convolution matrix into low-rank matrices, to integrate sparse connectivity into convolution. We also present a new dynamic activation function, named Dynamic Shift-Max, which improves non-linearity by maxing out multiple dynamic fusions between an input feature map and its circular channel shift. Building upon these two new operators, we arrive at a family of networks, named MicroNet, that achieve significant performance gains over the state of the art in the low-FLOP regime. For instance, under the constraint of 12M FLOPs, MicroNet achieves 59.4% top-1 accuracy on ImageNet classification, outperforming MobileNetV3 by 9.6%.

Models

Architecture: Micro-Factorized Pointwise Convolution of MicroNet.
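The factorization idea from the overview can be illustrated with a small NumPy sketch. This is not the project's implementation: it assumes the pointwise convolution matrix is written as a product of two block-diagonal (grouped) factors with a fixed channel permutation in between, and the names `factorized_pointwise`, `reduction`, and `groups`, as well as the specific sizes, are hypothetical choices made for illustration.

```python
import numpy as np


def block_diagonal(rows, cols, groups, rng):
    """Random block-diagonal matrix with `groups` equally sized blocks."""
    assert rows % groups == 0 and cols % groups == 0
    r, c = rows // groups, cols // groups
    m = np.zeros((rows, cols))
    for g in range(groups):
        m[g * r:(g + 1) * r, g * c:(g + 1) * c] = rng.standard_normal((r, c))
    return m


def shuffle_permutation(n, groups):
    """Permutation matrix that interleaves channels across groups."""
    idx = np.arange(n).reshape(groups, n // groups).T.reshape(-1)
    return np.eye(n)[idx]


def factorized_pointwise(channels, reduction, groups, seed=0):
    """Return (P, Phi, Qt) so that W = P @ Phi @ Qt is a C x C matrix."""
    rng = np.random.default_rng(seed)
    hidden = channels // reduction  # assumed bottleneck width C / R
    qt = block_diagonal(hidden, channels, groups, rng)  # compress C -> C/R
    phi = shuffle_permutation(hidden, groups)           # mix the groups
    p = block_diagonal(channels, hidden, groups, rng)   # expand C/R -> C
    return p, phi, qt


def param_count(channels, reduction, groups):
    """Learnable weights in the two grouped factors (Phi has none)."""
    hidden = channels // reduction
    return 2 * channels * hidden // groups


p, phi, qt = factorized_pointwise(channels=16, reduction=4, groups=2)
w = p @ phi @ qt
print(w.shape, np.linalg.matrix_rank(w), param_count(16, 4, 2), 16 * 16)
```

In this toy configuration the composed matrix has rank at most C/R = 4 and uses 64 weights instead of the 256 of a dense 16 x 16 pointwise convolution, yet every output channel still depends on every input channel because the permutation routes each group's hidden channels to different input groups.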

Architecture: Dynamic Shift-Max of MicroNet.
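The activation described in the overview, a maximum over several dynamic fusions of a feature map with its circular channel shifts, can be sketched as follows. This is a minimal NumPy illustration under stated assumptions rather than the project's code: the shift step of C / `groups` channels, the tensor layouts, and the helper `toy_coefficients` (global average pooling followed by a linear map and a sigmoid) are all hypothetical.

```python
import numpy as np


def dynamic_shift_max(x, coeffs, groups):
    """x: (C, H, W) feature map; coeffs: (K, J, C). Returns (C, H, W)."""
    channels = x.shape[0]
    _, j_shifts, _ = coeffs.shape
    assert channels % groups == 0
    step = channels // groups  # assumed shift size per step
    # shifted[j][i] = x[(i + j * step) mod C]
    shifted = np.stack([np.roll(x, -j * step, axis=0) for j in range(j_shifts)])
    # fused[k] = sum over j of coeffs[k, j, c] * shifted[j, c]
    fused = np.einsum("kjc,jchw->kchw", coeffs, shifted)
    # Max over the K fusions, taken element-wise.
    return fused.max(axis=0)


def toy_coefficients(x, weight, k_fusions, j_shifts):
    """Hypothetical input-dependent coefficients: pool, linear map, sigmoid."""
    pooled = x.mean(axis=(1, 2))  # (C,)
    logits = weight @ pooled      # (K * J * C,)
    return (1.0 / (1.0 + np.exp(-logits))).reshape(k_fusions, j_shifts, -1)


# Hand-picked coefficients: fusion 0 keeps x, fusion 1 keeps the shifted x.
x = np.arange(4.0).reshape(4, 1, 1)
coeffs = np.zeros((2, 2, 4))
coeffs[0, 0] = 1.0
coeffs[1, 1] = 1.0
y = dynamic_shift_max(x, coeffs, groups=2)
print(y.ravel())  # [2. 3. 2. 3.]

# Input-dependent coefficients from the hypothetical helper.
rng = np.random.default_rng(0)
x2 = rng.standard_normal((4, 3, 3))
weight = rng.standard_normal((2 * 2 * 4, 4))
dyn = toy_coefficients(x2, weight, k_fusions=2, j_shifts=2)
y2 = dynamic_shift_max(x2, dyn, groups=2)
```

With the hand-picked coefficients, each output channel is the larger of the channel itself and the channel two positions ahead (wrapping around), which shows how the shift lets one channel group borrow information from another.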

Highlights

An extremely efficient neural architecture design built on micro-factorized convolution and dynamic shift-max.

Benefits

- Micro-factorized convolution achieves the best trade-off between network width and connectivity.

- The dynamic shift-max provides a much stronger non-linear activation function, compensating for the reduction in non-linearity caused by the removal of network blocks.