Top-down Discriminant Saliency


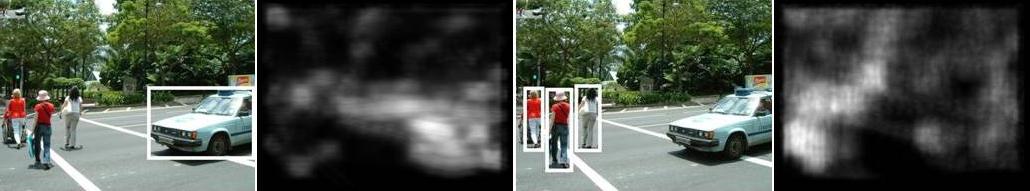

Biological vision systems rely on saliency mechanisms to cope with the complexity of visual perception. Rather than sequentially scanning all possible locations of a scene, saliency mechanisms make locations that merit further inspection "pop out" from the background. This enables the efficient allocation of perceptual resources and increases the robustness of recognition in highly cluttered environments.

In computer vision, saliency detectors have been widely used in the design of object recognition systems. In these applications, saliency is often justified as a pre-processing step that saves computation and improves robustness. However, most of these detectors are based solely on bottom-up processing, i.e. they are purely stimulus-driven, and do not tie the definition of saliency to the top-down goal of recognition. For example, saliency is frequently defined as the detection of edges, contours, corners, etc. As a result, the detected locations do not necessarily co-occur with the objects of interest.

In this work, we introduce a computational definition of top-down saliency that equates saliency with discrimination. The salient attributes of a given visual class are defined as the features that best discriminate between that class and all other classes of recognition interest. An optimal (top-down) discriminant saliency detector is derived through the combination of 1) efficient information-theoretic methods for feature selection, 2) a decision-theoretic rule for the identification of salient locations, and 3) exploitation of well-known statistical properties of natural images to guarantee computational efficiency.

The resulting optimal detector has been applied to the problem of learning object detectors with weak supervision (unsegmented training examples). Experimental evaluation shows that it effectively acts as a focus-of-attention mechanism, capable of pruning away bottom-up interest points that are irrelevant for recognition. In particular, this focus-of-attention mechanism is shown to perform well with respect to a number of properties desirable for recognition: 1) the ability to localize objects embedded in significant amounts of clutter, 2) the amount of information relevant to the recognition task that is captured by the salient points, 3) the robustness of salient points to various geometric transformations and pose variability, and 4) the richness of the set of visual attributes that can be considered salient.
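To make the first ingredient concrete, the sketch below ranks features by how much information their responses carry about the label "class of interest vs. all other classes". It is only an illustration under stated assumptions, not the detector described on this page: the histogram-based mutual information estimate, the function names (`mutual_information`, `select_salient_features`, `make_toy_data`) and the synthetic Gaussian responses are all hypothetical choices made for the example.

```python
import math
import random
from collections import Counter


def mutual_information(responses, labels, bins=8):
    """Histogram estimate of I(X; Y) in bits between a scalar feature
    response X and a class label Y (assumed estimator, for illustration)."""
    lo, hi = min(responses), max(responses)
    width = (hi - lo) / bins or 1.0  # constant feature: fall back to one bin
    quantized = [min(int((x - lo) / width), bins - 1) for x in responses]
    n = len(responses)
    joint = Counter(zip(quantized, labels))
    px, py = Counter(quantized), Counter(labels)
    mi = 0.0
    for (x, y), count in joint.items():
        p_xy = count / n
        # p(x, y) / (p(x) p(y)) written with raw counts
        mi += p_xy * math.log2(count * n / (px[x] * py[y]))
    return mi


def select_salient_features(features, labels, k=1):
    """Return the k feature names with the highest estimated mutual
    information with the class label, plus all scores."""
    scores = {name: mutual_information(resp, labels)
              for name, resp in features.items()}
    ranked = sorted(scores, key=scores.get, reverse=True)
    return ranked[:k], scores


def make_toy_data(n=2000, seed=0):
    """Synthetic responses: label 1 is the class of interest, 0 is 'all others'."""
    rng = random.Random(seed)
    labels = [rng.randint(0, 1) for _ in range(n)]
    features = {
        # response distribution shifts with the class: discriminant
        "informative": [rng.gauss(2.0 * y, 1.0) for y in labels],
        # response distribution ignores the class: not discriminant
        "noise": [rng.gauss(0.0, 1.0) for _ in labels],
    }
    return features, labels


if __name__ == "__main__":
    features, labels = make_toy_data()
    top, scores = select_salient_features(features, labels, k=1)
    print("selected:", top)
    print("scores (bits):", scores)
```

In this toy setting, a feature whose response distribution is the same for every class scores close to zero and would be pruned, which mirrors the idea that attributes are salient only insofar as they discriminate the class of interest from the others.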


Contact: Dashan Gao, Nuno Vasconcelos, Sunhyoung Han


© SVCL